https://gist.github.com/imcomking/b1acbb891ac4baa69f32d9eb4c221fb9

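```python
import numpy as np


def exponentially_weighted_matrix(discount, mat_len):
    # `discount` strictly above the diagonal, 1 everywhere else.
    # (A boolean mask keeps this correct even when discount == 0.)
    above_diag = np.triu(np.ones((mat_len, mat_len), dtype=bool), k=1)
    DisMat = np.where(above_diag, discount, 1.0)
    # Row-wise cumulative product: entry (i, j) with j > i becomes discount ** (j - i).
    DisMat = np.cumprod(DisMat, axis=1)
    # Keep the upper triangle only.
    return np.triu(DisMat)


def exponentially_weighted_cumsum(discount, np_data):
    DisMat = exponentially_weighted_matrix(discount, np_data.shape[0])
    # value[i] = sum over j >= i of discount ** (j - i) * np_data[j]
    value = np.dot(DisMat, np_data.reshape(-1, 1))
    # Flatten to 1-D; note that the result is returned in reversed order.
    return value[::-1].transpose()[0]
```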
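As a sanity check on what the matrix product computes, here is a minimal sketch that compares a plain backward loop with an explicit weight matrix. The name `discounted_returns_loop` and the sample rewards are assumptions made for illustration; they are not part of the gist. Both results are in forward time order, whereas `exponentially_weighted_cumsum` above returns the same values reversed.

```python
import numpy as np


def discounted_returns_loop(discount, rewards):
    """Reference implementation (hypothetical helper): G[t] = r[t] + discount * G[t + 1]."""
    rewards = np.asarray(rewards, dtype=float)
    returns = np.zeros_like(rewards)
    running = 0.0
    # Walk backwards so each step reuses the return of the following step.
    for t in range(len(rewards) - 1, -1, -1):
        running = rewards[t] + discount * running
        returns[t] = running
    return returns


rewards = np.array([1.0, 2.0, 3.0])  # made-up sample data
loop_returns = discounted_returns_loop(0.5, rewards)

# Same quantity via a weight matrix W[i, j] = discount ** (j - i) for j >= i, else 0.
idx = np.arange(len(rewards))
weights = np.triu(0.5 ** np.maximum(idx[None, :] - idx[:, None], 0))
matrix_returns = weights @ rewards

print(loop_returns)
print(matrix_returns)
```

The matrix form needs O(n²) memory, so the loop is likely the better choice for long episodes; the matrix form is mainly convenient for short trajectories or batched computation.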

# Reinforcement Learning Tips

When solving problems with a continuous action space, the batch size needs to be very large: on the order of 512 or 1024, not 32 or 64.

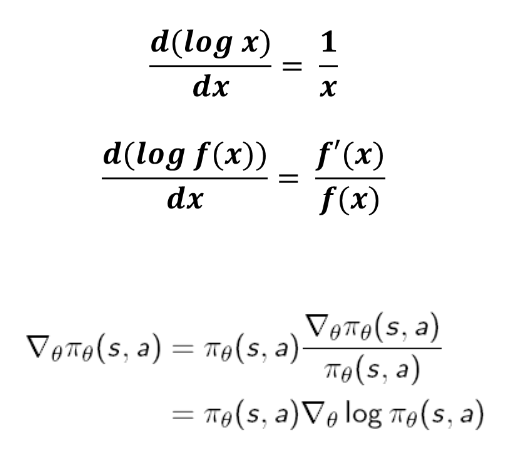

# Log-Derivative Trick

https://talkingaboutme.tistory.com/entry/RL-Spinning-Up-Intro-to-Policy-Optimization
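For quick reference, the identity usually meant by this name can be written as follows. The notation ($p_\theta$ for a parameterized distribution, $f$ for a function that does not depend on $\theta$) is an assumption chosen here, not taken from the linked post, and the second line assumes the gradient and the expectation can be interchanged.

$$
\nabla_\theta \log p_\theta(x) = \frac{\nabla_\theta p_\theta(x)}{p_\theta(x)}
\quad\Longrightarrow\quad
\nabla_\theta p_\theta(x) = p_\theta(x)\, \nabla_\theta \log p_\theta(x)
$$

$$
\nabla_\theta\, \mathbb{E}_{x \sim p_\theta}\!\left[ f(x) \right]
= \mathbb{E}_{x \sim p_\theta}\!\left[ f(x)\, \nabla_\theta \log p_\theta(x) \right]
$$

The second form is what makes a policy gradient estimable from samples: the gradient of an expectation becomes an expectation that can be averaged over sampled trajectories.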

# The value of a terminal state is always defined to be 0.
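In practice this rule usually shows up as a "done" mask when bootstrapping. The following is a minimal sketch of one-step TD targets under that convention; the function name `td_targets`, its parameters, and the sample numbers are assumptions for illustration only.

```python
import numpy as np


def td_targets(rewards, next_values, dones, discount=0.99):
    """One-step TD targets r + discount * V(s'), with V(s') forced to 0 at terminal states."""
    rewards = np.asarray(rewards, dtype=float)
    next_values = np.asarray(next_values, dtype=float)
    # 1.0 for non-terminal transitions, 0.0 where the episode ended.
    not_done = 1.0 - np.asarray(dones, dtype=float)
    return rewards + discount * not_done * next_values


targets = td_targets(
    rewards=[1.0, 1.0],
    next_values=[5.0, 5.0],  # made-up critic estimates for the next states
    dones=[False, True],     # the second transition ends the episode
    discount=0.9,
)
print(targets)
```

Without the mask, the critic's estimate for the state after the episode's end would leak into the target, even though no further reward can be collected from there.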
